GOOGLE I/O 2022: TALAN'S RECAP

Artificial intelligence at every doorstep!

Artificial intelligence at the heart of Google's product strategy

Google's annual event was held on May 11-12, 2022, in person in Mountain View, with an impressive series of announcements, as expected.

During the opening keynote, Sundar Pichai (CEO) reminded us of Google’s raison d’être: “to organize the world's information and make it universally accessible and useful.” He and his teams then gave a clear, concrete demonstration of their main achievements across their range of services (Translate, Maps, Search, Android, Pixel, Nest, etc.).

It was obvious how deeply Artificial Intelligence is now embedded in the design and sophistication of these services. We will therefore focus on this area in particular.

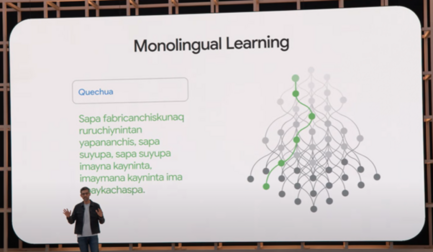

Take simultaneous written and spoken translation, which is a strong strategic priority and meets concrete needs for communication and access to information. Google has worked on machine learning models that move from a bilingual to a monolingual approach, learning a language without relying on pairs of translated examples. As a result, 24 new languages were added to Google Translate, including Quechua. The same theme runs through the assistants, YouTube videos with automatically generated subtitles in 16 languages, and augmented reality with Google's new glasses prototypes.

Mapping: an ever more immersive user experience with an eco-responsible approach

On the mapping side (Google Maps), stronger Computer Vision models make it possible to detect buildings from satellite images. Since July 2020, this has increased the number of buildings identified in Africa fivefold. More generally, 20% of the buildings on Google Maps were detected using these techniques.

A new experience is also being added: "immersive view". It combines 3D and machine learning to give a better sense of a place before going there. This feature will initially be available mainly for large cities.

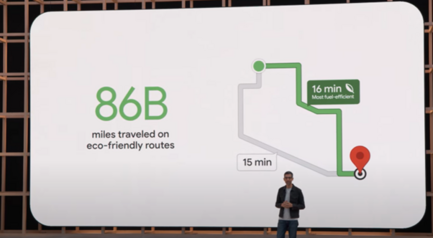

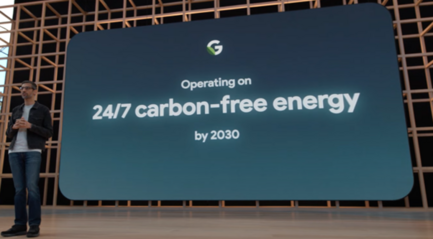

Maps also addresses the carbon footprint and cost of travel by letting users choose the most economical and "eco-friendly" route. For example, a route that takes three minutes longer can save 18% on fuel. The same applies to Google Flights, which will display the estimated carbon footprint of the different flight options for a destination. Sundar Pichai recalled that, given the rapid growth of its infrastructure, Google is reaffirming its commitment to operate carbon-free by 2030.

Automated content synthesis and Image Processing

In terms of content synthesis, Google announced that it has advanced its NLP (Natural Language Processing) algorithms to produce summaries of Google Docs and, soon, of chat conversations, particularly in Google Meet.

Mindful of how we look on video calls, Google has again turned to Image Processing to improve visual rendering whatever the initial conditions. Studio-like features have been developed. Specific work has been carried out on skin tones in the interests of inclusion and diversity. This work also appears in the new features of Google Search.

Search, Google's flagship service

The “any way, anywhere” logic is becoming increasingly sophisticated, going beyond text-based NLP and voice search. The "multisearch" and "multisearch near me" concepts will add a visual component. This dimension will also be enriched with "scene exploration", which should make it possible to identify, in a camera view from a phone for example, which objects match your search criteria (via Google's Knowledge Graph). To ensure that machine learning algorithms better account for variation in skin tones, Google worked with Harvard University professor Dr. Ellis Monk, an expert on the subject. The group has made part of this research freely available.

AI Test Kitchen by Google: for more ethical and inclusive artificial intelligence

AI Test Kitchen is an app where people can experiment with, and give feedback on, some of Google’s latest AI technologies. The goal is to learn, improve and innovate together responsibly in AI. The application was designed to anticipate problems related to Artificial Intelligence, such as badly wrong or even offensive results, with a wider audience and in a participatory manner. It uses LaMDA 2, Google’s conversational AI model, and comes with three conversation options: “Imagine It”, “Talk About It” and “List It”. User feedback will be used to improve the relevance of the algorithms.

The end of "OK Google"?

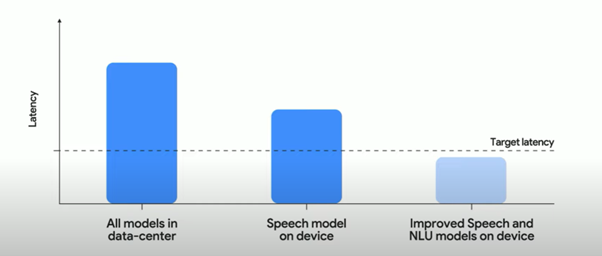

The "assistants" (e.g. Nest Hub Max) will no longer require a systematic "OK Google", thanks to a "Look and Talk" feature that uses facial and voice recognition, executed on the device itself (for privacy and latency), to make the interaction more fluid. The NLU (Natural Language Understanding) algorithms have also been improved to reduce interaction time.

Cybersecurity enhanced by artificial intelligence

A long part of the keynote was dedicated to “AI-powered cybersecurity” under the slogan “safer with Google”. The aim is to protect users every day against attacks, 90% of which take the form of phishing.

Pathways: first results for Google's new AI architecture

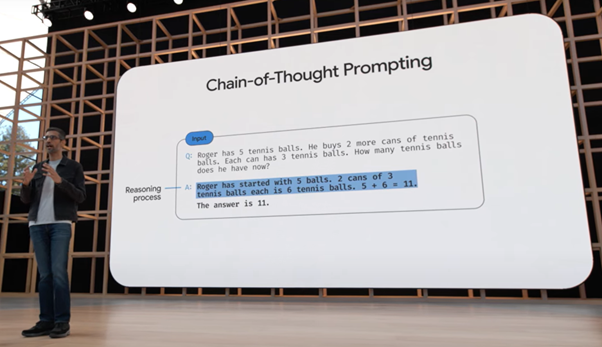

Google presented its new Large Language Model (LLM), PaLM 540B (Pathways Language Model), which is the first result of “Pathways”, Google’s new AI architecture. Combining the model with a “chain-of-thought” logic, which breaks a problem down into intermediate steps, yields more accurate results. The aim of Pathways is to handle a considerable number of tasks in parallel and to learn new tasks very quickly.
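To make the "chain-of-thought" idea more concrete, here is a minimal sketch in Python of how such a prompt can be assembled. It is an illustration only, not Google's implementation: the function names and the word problems are hypothetical, and no model is actually called. The point is simply that the prompt includes a worked example whose answer spells out its intermediate steps, which encourages the model to reason step by step on the new question.

```python
# Illustrative sketch only: the names and examples below are hypothetical
# and were not shown in the keynote. No language model is called here.

def direct_prompt(question: str) -> str:
    """Standard prompt: ask for the answer with no reasoning shown."""
    return f"Q: {question}\nA:"


def chain_of_thought_prompt(question: str, worked_example: tuple[str, str, str]) -> str:
    """Prefix the question with one worked example that shows its steps."""
    example_question, example_steps, example_answer = worked_example
    return (
        f"Q: {example_question}\n"
        f"A: {example_steps} The answer is {example_answer}.\n\n"
        f"Q: {question}\n"
        f"A:"
    )


# A made-up worked example: the answer is broken down into small steps.
example = (
    "A train leaves with 120 passengers. 45 get off and 30 get on. "
    "How many passengers are on the train?",
    "The train started with 120 passengers. After 45 got off, 120 - 45 = 75. "
    "After 30 got on, 75 + 30 = 105.",
    "105",
)

question = (
    "A bakery had 40 loaves. It sold 28 and baked 15 more. "
    "How many loaves does it have?"
)

prompt = chain_of_thought_prompt(question, example)
print(prompt)
```

The resulting text would then be sent to a language model, which is expected to continue after the final "A:" in the same step-by-step style as the worked example.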

New for Android

Google also made a string of announcements around Android and its devices: the new mid-range Pixel 6a phone, the Pixel 7, the highly anticipated Pixel Watch (a smartwatch that integrates with Fitbit), the premium Pixel Buds Pro, and more.

Technology serving people: a vision we share

Google continues to invest heavily in Artificial Intelligence, relying on infrastructure of exceptional scale and on very concrete applications. It constantly strives for ease of use by masking the extreme technical complexity behind its services, which is a feat in itself. Inclusion, ethics, AI bias and sustainability are gaining prominence, in line with societal concerns.

At the same time, and quite remarkably given that the term is being used everywhere, the metaverse was not mentioned. This was a perfectly conscious omission on the part of the Group, which thus avoided the trap of promoting metaverse-branded creations without any fundamental reflection or grounding in the real world...

However, when closing the keynote, Sundar Pichai put the emphasis on the new frontier represented by augmented reality, as opposed to a completely virtual world:

These AR capabilities are already useful on phones and the magic will really come alive when you can use them in the real world without the technology getting in the way. That potential is what gets us most excited about AR: the ability to spend time focusing on what matters in the real world, in our real lives. Because the real world is pretty amazing! It’s important we design in a way that is built for the real world — and doesn’t take you away from it. And AR gives us new ways to accomplish this.

Sundar Pichai, CEO, Google

By Nicolas Cambolin, Partner, Global Director Data Intelligence

Photo credits: Google